During my Thanksgiving-induced food coma, I ran into a problem in my mind. Recall that we had begun to think about right and wrong, just and unjust, should and shouldn’t, could and couldn’t, could do to and could do onto, etc. in an abstract mental conception of the Action Space. Roughly speaking, and with the rigor prescribed at the outset of the Nicomachean Ethics, the Action Space is the set of all possible actions anybody or any body could do to another at a specified time. For a significant portion of this space, a subspace, we can describe actions in English sentences: “Eve gives Adam a forbidden fruit (at any time).” But we do not unnecessarily restrict ourselves to these at this stage. Sets have interesting yet practical operators that we use to model other aspects of our world, including membership and subset relations, union and intersection operators, etc. The hope is that with sets of actions we can both cover a lot of ground in representing our real world and leverage our innate understanding of these concepts to interpret matters of action.

This framing gives us an immediate way to compare the sizes of action spaces. Suppose there is an action space $L$ that represents the actions permitted by the U.S. Constitution. We can also have the action space $L_{USC}$ specified by the United States Code (USC). We can very safely demand that, when interpreted, their action spaces satisfy $L_{USC} \subsetneq L$. We then say that the Constitution of the United States of America grants strictly more freedom than the USC: the set of actions permitted by the former is a strict superset of those permitted by the latter. More actions means more rights, liberties and freedoms. Fewer actions means more constraints and fewer choices. "Strictly more free" is a partial ordering of all action spaces. In a looser sense of freedom, we can also compare the cardinalities of two action spaces. But clearly this ordering is not very useful: having all the rights to sneeze in various poses is not nearly as important as the right to take a sip of water. Of course, this too can be ameliorated with a utilitarian's individual utility function, a social welfare function, or other such attempts to produce a useful preference ordering over action spaces.
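To make the two orderings concrete, here is a minimal Python sketch that models action spaces as finite sets of action descriptions. The sets `constitution`, `usc` and `other` are hypothetical toy stand-ins, not an encoding of any legal text. The strict-superset test ("strictly more free") leaves some pairs incomparable, while cardinality ranks every pair but ignores which actions are involved.

```python
from itertools import combinations


def strictly_more_free(a: frozenset, b: frozenset) -> bool:
    """True if action space `a` permits everything `b` does and more (a is a strict superset of b)."""
    return a > b  # frozenset's `>` is the strict-superset test


def comparable(a: frozenset, b: frozenset) -> bool:
    """Under the subset order, two spaces are comparable only if one contains the other."""
    return a <= b or b <= a


# Hypothetical toy action spaces (illustrative only).
constitution = frozenset({"speak", "assemble", "sip water", "sneeze standing", "sneeze sitting"})
usc = frozenset({"speak", "sip water", "sneeze standing"})
other = frozenset({"speak", "sneeze standing", "sneeze sitting", "sneeze lying down"})

spaces = {"constitution": constitution, "usc": usc, "other": other}

for (n1, s1), (n2, s2) in combinations(spaces.items(), 2):
    print(f"{n1} vs {n2}: comparable={comparable(s1, s2)}, sizes={len(s1)} vs {len(s2)}")
```

Here `usc` is strictly less free than `constitution`, while `other` is incomparable with both. By cardinality `other` outranks `usc`, even though it lacks the sip of water and only adds ways to sneeze.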

Having considered many perspectives on the permissibility and selection of actions, and holding conservative beliefs about our physical universe and all that we could possibly be concerned with, we have come to design an API through which thinking and controlling systems may interact with our faculties that deal with rules of law and with right and wrong. We suspend our fear of making a homunculus argument: we do not claim to have found or made such modules of artificial intelligence, only that we want to separate these concerns to reduce the complexity of reasoning. The separation is not physical; all the thinking could be produced on the same gray matter or CPU. The interface can also be defined implicitly. For visualization, consider a hyperplane through which these two separate functions connect.

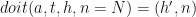

For the purpose of characterizing whether an action is permitted by a determining agent or subcomponent $E$, we ask that a permission function $P_E$ be accessible.

![P_E(a, t, H, n=N) -> [permissible|impermissible]](https://s0.wp.com/latex.php?latex=P_E%28a%2C+t%2C+H%2C+n%3DN%29+-%3E+%5Bpermissible%7Cimpermissible%5D+&bg=ffffff&fg=111111&s=0&c=20201002)

The parameters are typed:

- $a$ is an action of the action space.

- $t$ is a set of timestamps in question.

- $T$ is a predesignated time index: a set of objects known as timestamps. It is totally ordered. For convenience we also include the open and closed contiguous sets of timestamps, called intervals or ranges, written with the ‘[]()’ symbols. We use the symbol < to mean before, > to mean after, and = to mean at the same time.

- The time $t$ can be a single timestamp or a set of timestamps; the function is polymorphic in $t$.

- The type of a time parameter should be inferred from context if ambiguous: happening at “a time $t$” means a single timestamp, while “happening at time/times $t$” means occurring at all times in the set $t$.

- Often $T$ is specified to be the real numbers or the integers. In this case a reference must be set for time 0, as well as a scale explaining what a duration of 1 means in the physical world.

- $H$ is the whole history of the world up to the present.

- History has, among other information, the timestamp of now, $H_n$, which is the maximal time about which we have information through $H$. Calling it “now” is more positive than calling it the end of history.

- Regarding performed actions, $H$ contains a log of actions that have been taken, each with the timestamp of when it was taken. We use the convenience expression $H(a, t)$ to check whether action $a$ was reportedly taken in $H$ at time $t$. Not specifying $t$, as in $H(a)$, asks whether the action was ever taken. $H(a, t)$ is a total, two-valued function: an action is either taken or not taken; it cannot be unknown.

- $n$ is the nature of the world. It may contain such matters as the laws of physics, the existence of God, etc. Since we care most about the nature that we are in, by default this parameter is $N$, the nature of our world. We should be able to query it for information such as constants like $e$ and $c$.

$P_E(a, t, H, n)$ therefore yields the result that we use to decide whether an action is permissible under some system of determination for propriety and preference. The answer given by $P_E$ is $E$’s answer at time $H_n$. An agent, upon receiving the permissible result from $P_E$, will understand that the action they asked about is permitted at the time in question, given the history of the world leading up to time $H_n$ and our nature.
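One way to pin down the parameter types is a small Python sketch. It assumes actions are plain strings, $T$ is the real numbers (as floats), history is a log of (action, timestamp) pairs, and nature is a table of named constants. The names `History`, `Nature`, `Verdict`, `taken`, `OUR_NATURE` and the toy rule `p_once` are hypothetical illustrations, not part of the specification. `taken` mirrors the convenience expression for asking whether an action was taken: it accepts one timestamp, a set of timestamps (read as "at every time in the set"), or none ("ever").

```python
from __future__ import annotations

import enum
from dataclasses import dataclass, field
from typing import Mapping, Optional, Protocol, Union

Action = str                                  # hypothetical: actions as descriptions
Timestamp = float                             # T taken to be the reals
TimeArg = Union[Timestamp, frozenset]         # one timestamp, or a set of them


class Verdict(enum.Enum):
    PERMISSIBLE = "permissible"
    IMPERMISSIBLE = "impermissible"


@dataclass(frozen=True)
class History:
    log: tuple = ()                           # ((action, timestamp), ...)

    @property
    def now(self) -> Timestamp:
        """H_n: the latest timestamp the history holds information about."""
        return max((ts for _, ts in self.log), default=float("-inf"))

    def taken(self, a: Action, t: Optional[TimeArg] = None) -> bool:
        """Always True or False, never unknown."""
        times = {ts for act, ts in self.log if act == a}
        if t is None:                         # was it ever taken?
            return bool(times)
        if isinstance(t, frozenset):          # "at times t": at every time in the set
            return t <= times
        return t in times                     # "at a time t": that single timestamp


@dataclass(frozen=True)
class Nature:
    constants: Mapping[str, float] = field(default_factory=dict)


class PermissionFunction(Protocol):
    def __call__(self, a: Action, t: TimeArg, h: History, n: Nature) -> Verdict: ...


OUR_NATURE = Nature({"c": 299_792_458.0})    # stand-in for N (speed of light in m/s)


def p_once(a: Action, t: TimeArg, h: History, n: Nature = OUR_NATURE) -> Verdict:
    """Toy P_E: an action is permissible only if it has never been taken before."""
    return Verdict.IMPERMISSIBLE if h.taken(a) else Verdict.PERMISSIBLE
```

Because `taken` always returns a boolean, it cannot report "unknown", matching the requirement that an action is either taken or not.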

$E$ is a member of the world and accessible as part of nature. We could also imagine historical $E$s that are the result of history: someone made a computer, wrote programs, and the program decides…

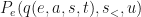

In considering the permissibility of actions we should also, for functional purposes, suppose the existence of the doit function, $\mathrm{doit}(a, t, H, n) \to (H', n')$. All that doit does is instantaneously add the action to history and nature at time $t$ and report the results of that insertion. When specified, a natural action is one that does not change nature, $n' = n$, and a supernatural action is one that changes nature, $n' \neq n$.

Two actions $a_1$ and $a_2$ are homopotent if $\mathrm{doit}(a_1, t, H, n) = \mathrm{doit}(a_2, t, H, n)$. This equivalence relation partitions actions into equivalence classes. Such classes exist even in natural language, which can have many descriptions of exactly the same action. We will prefix homopotency with historic and natural for equivalences that match only history and only nature, respectively.
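A toy Python sketch of doit and the three flavors of homopotency follows. It assumes history is a tuple of (deed, timestamp) pairs and nature is a tuple of (name, value) pairs. The `DEED` table is a hypothetical stand-in for interpreting English descriptions into the deed they denote, and "halve(c)" is an invented supernatural deed. Grouping actions by the chosen projection of doit's result yields the equivalence classes.

```python
from collections import defaultdict

# Hypothetical interpretation table: English descriptions -> the deed they denote.
DEED = {
    "Eve gives Adam a forbidden fruit": "give(Eve, Adam, fruit)",
    "Adam receives a forbidden fruit from Eve": "give(Eve, Adam, fruit)",
    "Adam sneezes": "sneeze(Adam)",
    "Adam coughs": "cough(Adam)",
    "a deity halves the speed of light": "halve(c)",
}


def doit(a, t, h, n):
    """Instantaneously add action `a` at time `t`; return the new (history, nature)."""
    deed = DEED[a]
    if deed == "halve(c)":                    # the only supernatural deed in this toy
        n = tuple((k, v / 2 if k == "c" else v) for k, v in n)
    return h + ((deed, t),), n


PROJECT = {
    "full": lambda r: r,                      # homopotent
    "historic": lambda r: r[0],               # historically homopotent
    "natural": lambda r: r[1],                # naturally homopotent
}


def homopotent(a1, a2, t, h, n, kind="full"):
    key = PROJECT[kind]
    return key(doit(a1, t, h, n)) == key(doit(a2, t, h, n))


def classes(actions, t, h, n, kind="full"):
    """Partition `actions` into equivalence classes under the chosen homopotency."""
    groups = defaultdict(list)
    for a in actions:
        groups[PROJECT[kind](doit(a, t, h, n))].append(a)
    return list(groups.values())


def is_natural(a, t, h, n):
    """A natural action leaves nature unchanged."""
    return doit(a, t, h, n)[1] == n
```

The two descriptions of Eve's gift fall into one class. Sneezing and coughing are naturally homopotent (both leave nature alone) but not historically homopotent, since history records different deeds.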

For convenience of notation, we can query nature and history for, among other things, the deed of past actions:

We are conscious of many other potential problems with our present endeavors. Mathematicians have given us many troubling thoughts about sets of things. One example of a problem with these undertakings is a known limitation of most of our computational machinery: whether a function terminates is, in general, undecidable (e.g. the Halting Problem).

In a practical implementation the function may fail to produce a response that is either permissible or impermissible. If we have to wait forever, then this function is not useful. If we do not know whether it terminates, then we do not know whether we can use the result. Of course, software engineers have long worked around this issue by putting a time box around functions. Each function evaluation is surrounded by machinery that waits patiently for a result, but if a preset time box is exceeded, the effort to evaluate the function is suspended and the invoking agent is informed that the function did not function as expected. Since time passes as surely as we can measure it, this time-boxing wrapper guarantees that we can implement a function with this signature:

![P_E(a, t, H, n=N) -> [permissible|impermissible|indiscernible]](https://s0.wp.com/latex.php?latex=P_E%28a%2C+t%2C+H%2C+n%3DN%29+-%3E+%5Bpermissible%7Cimpermissible%7Cindiscernible%5D+&bg=ffffff&fg=111111&s=0&c=20201002)
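A minimal sketch of such a time-boxing wrapper in Python, assuming the underlying decision procedure returns `True` for permissible and `False` for impermissible. It uses the standard library's `concurrent.futures` thread pool; the names `Verdict`, `time_boxed` and `slow_decider` are hypothetical. One caveat of this choice: Python cannot forcibly stop a running thread, so the sketch stops waiting and discards the late result rather than truly suspending the work (a subprocess-based variant could terminate it).

```python
import concurrent.futures
import enum
import time
from typing import Any, Callable


class Verdict(enum.Enum):
    PERMISSIBLE = "permissible"
    IMPERMISSIBLE = "impermissible"
    INDISCERNIBLE = "indiscernible"


# Shared worker pool: a runaway evaluation keeps one worker busy until it returns.
_POOL = concurrent.futures.ThreadPoolExecutor(max_workers=4)


def time_boxed(decide: Callable[..., bool], seconds: float) -> Callable[..., Verdict]:
    """Wrap a decision procedure that may never terminate in a time box.

    `decide` returns True (permissible) or False (impermissible); running past
    `seconds` or raising an exception both yield INDISCERNIBLE.
    """
    def p_e(*args: Any, **kwargs: Any) -> Verdict:
        future = _POOL.submit(decide, *args, **kwargs)
        try:
            ok = future.result(timeout=seconds)
        except concurrent.futures.TimeoutError:
            # Threads cannot be killed: stop waiting and ignore the eventual result.
            future.cancel()  # best-effort; has no effect once the call is running
            return Verdict.INDISCERNIBLE
        except Exception:
            return Verdict.INDISCERNIBLE  # the function did not function as expected
        return Verdict.PERMISSIBLE if ok else Verdict.IMPERMISSIBLE
    return p_e


# Usage: a hypothetical decider that takes far longer than its time box.
def slow_decider(a, t, h, n):
    time.sleep(0.5)
    return True


p_e = time_boxed(slow_decider, seconds=0.05)
print(p_e("sneeze", 0.0, (), ()))
```

Treating crashes like timeouts keeps the contract simple: the caller only ever sees one of the three verdicts.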

Users of our API are warned and required to handle the case in which such a component fails to function. This demand is not unreasonable, as there are many such safety mechanisms in most modern artificial computational systems. The result indiscernible expresses no opinion regarding the action. The user of this API may choose conventions for how to react to it. An information security implementation may choose to be conservative and treat indiscernible as impermissible to ensure security, whereas a human legal system may choose to be liberal and treat indiscernible as permissible, granting maximal freedom when in doubt.
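The two conventions correspond to what security engineers call failing closed and failing open. A minimal Python sketch, where `Verdict` and `resolve` are hypothetical names and the choice of convention is a single flag supplied by the caller:

```python
import enum


class Verdict(enum.Enum):
    PERMISSIBLE = "permissible"
    IMPERMISSIBLE = "impermissible"
    INDISCERNIBLE = "indiscernible"


def resolve(v: Verdict, fail_closed: bool) -> bool:
    """Turn a three-valued verdict into a go/no-go decision.

    fail_closed=True : conservative (information security) convention, doubt -> deny.
    fail_closed=False: liberal (legal) convention, doubt -> allow.
    """
    if v is Verdict.INDISCERNIBLE:
        return not fail_closed
    return v is Verdict.PERMISSIBLE
```

Only the indiscernible case depends on the convention; definite answers pass through unchanged.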

Another oft-used software engineering safety technique is rate limiting. The provider of the $P_E$ API may choose to rate-limit how much any single agent may query the API. Rate limiting helps to mitigate denial-of-service (DoS) attacks on the permission system. In reality, this rate limit is enforced by the limits of our implementations. In theory, a rate limit on API invocation allows us to analyze the ability of a real agent to follow the directions of a permission function under realistic constraints. Rate limits can be expressed as a limit on how many requests can be made within any contiguous interval of a set length (i.e. queries per second (QPS)), or as a rough restriction on the interval between requests, among many other choices.
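Both forms of rate limit can be sketched in a few lines of Python. This is an illustration under stated assumptions, not a production limiter: the class names are hypothetical, each agent is identified by a string, and the caller passes the current time explicitly (rather than reading a clock) so the behavior is deterministic. The first class is a sliding-window log (at most `max_requests` per window); the second enforces a minimum interval between accepted queries.

```python
from collections import deque


class SlidingWindowLimiter:
    """At most `max_requests` queries per agent in any window of `window` seconds."""

    def __init__(self, max_requests: int, window: float):
        self.max_requests = max_requests
        self.window = window
        self._log = {}                        # agent -> deque of accepted timestamps

    def allow(self, agent: str, now: float) -> bool:
        q = self._log.setdefault(agent, deque())
        # Forget accepted queries that have slid out of the window (now - window, now].
        while q and q[0] <= now - self.window:
            q.popleft()
        if len(q) < self.max_requests:
            q.append(now)
            return True
        return False                          # rejected queries are not recorded


class MinIntervalLimiter:
    """The rougher rule: consecutive accepted queries must be `min_interval` apart."""

    def __init__(self, min_interval: float):
        self.min_interval = min_interval
        self._last = {}                       # agent -> timestamp of last accepted query

    def allow(self, agent: str, now: float) -> bool:
        last = self._last.get(agent)
        if last is not None and now - last < self.min_interval:
            return False
        self._last[agent] = now
        return True
```

Limits are tracked per agent, so one agent exhausting its budget does not block another.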

As a third problematic example, consider what we shall call the quit-right problem, a shady imitator of Russell's Paradox. The problem is self-explanatory. Are quit-right actions members of our action space? Can one consider the right to give up a right? If so, can we quit all quit-right rights? Can we quit the quit-right action itself?

Legal theory has a convenient solution to this problem. In legal arrangements, one can make something called a default rule and something called a mandatory rule. A default rule applies if there is no forceful contract or declaration to the contrary. Mandatory rules, on the other hand, are rules that cannot be overridden, irrespective of contracts or forceful declarations. Certainly a quit-right is an action we can imagine as part of a legal action space, but a legal action space will not contain quit-right actions for actions that are mandatorily protected. A commonplace example is the force of nondisclosure agreements (NDAs) under the rule of law. Here a natural person or other legal entity may contract away their right of speech and other expression: they quit their right of speech and freedom of expression. However, even if you sign with a $2^{10000000}$-bit cryptographic signature carved into stone, you cannot sign away your life to be taken by another individual. It will always be called into question whether that other individual is responsible for advertently or inadvertently causing your loss of life, irrespective of your renunciation thereof. The force of such a system is infinite: the person may not change his right to change his right to life, he may not give himself permission to give himself permission to contract or declare away his life, and so on and so forth.
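A toy Python sketch of the default/mandatory distinction, under heavy simplifying assumptions: rights are plain strings, a waiver is a quit-right action recorded per person, and the class `LegalSystem` with its methods is hypothetical. The NDA case corresponds to waiving a default right (speech); the right to life is mandatory, so no quit-right action for it exists in the action space.

```python
from dataclasses import dataclass, field


@dataclass
class LegalSystem:
    """Hypothetical permission system with default and mandatory rules."""
    defaults: set = field(default_factory=set)    # rights that may be waived by contract
    mandatory: set = field(default_factory=set)   # rights no contract can waive
    waived: dict = field(default_factory=dict)    # person -> set of waived rights

    def quit_right_exists(self, right: str) -> bool:
        """Is 'quit `right`' in the action space at all? Only for default rules."""
        return right in self.defaults and right not in self.mandatory

    def quit_right(self, person: str, right: str) -> bool:
        """Attempt the quit-right action; return True if the waiver took effect."""
        if not self.quit_right_exists(right):
            return False
        self.waived.setdefault(person, set()).add(right)
        return True

    def holds(self, person: str, right: str) -> bool:
        """Does `person` currently hold `right`?"""
        if right in self.mandatory:
            return True                               # mandatory rules always apply
        return right in self.defaults and right not in self.waived.get(person, set())
```

Because the quit-right for a mandatory right is simply absent, there is nothing for a "quit the quit-right" to act on, which is how the regress stops in this toy.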

Those are cases of a subject quitting its own right. Again using the easy target of human life, the action space still contains actions such as the state killing you. Under some circumstances states maintain the right to kill you in their action space for the purpose of capital punishment. The American government also has the right to modify its own right regarding capital punishment within the confines of its constitution.

But what does the quit-right action look like in the action space? Let’s for simplicity of expression designate a macro $q(e, a, s, t=\infty)$ to mean the action:

quit the right to take action $a$ in the permissibility-determining system $e$ at all times on or after timestamp $s$ and before time $t$.

The meaning of macro $q$: if $q(e, a, s, t)$ is permissible, and if $q(e, a, s, t)$ was successfully taken in history $H$, then $P_e(a, t', H, n)$ returns impermissible for every $t'$ with $s \le t' < t$.
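The meaning of the macro can be checked with a toy Python sketch. It assumes history is a list of (action, timestamp) pairs of actions already successfully taken, that every action not covered by a quit is permissible, and that `Quit` and `p` are hypothetical names for $q(e, a, s, t)$ and $P_e$. Since a `Quit` may itself be the action being quit, quitting a quit-right is representable.

```python
import math
from dataclasses import dataclass


@dataclass(frozen=True)
class Quit:
    """The action q(e, a, s, t): quit the right to `action` in system `e` on [s, t)."""
    e: str
    action: object          # an action description, or another Quit
    s: float
    t: float = math.inf


def p(e, a, when, history):
    """Toy P_e: impermissible iff a quit taken in history covers (e, a, when).

    `history` holds (action, timestamp) pairs of actions successfully taken;
    everything not covered by a quit is permissible in this toy system.
    """
    for act, _taken_at in history:
        if (isinstance(act, Quit) and act.e == e and act.action == a
                and act.s <= when < act.t):
            return "impermissible"
    return "permissible"
```

The quit is scoped to one permission system: quitting "speak" under "law" leaves it permissible under "conscience".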

- Time is a totally ordered set of timestamps. These correspond to wall-clock time in our world. The set supports membership as well as open and closed intervals.

- Actions in the action space have success and failure return codes.

- doit succeeds only when the action is permitted.

- doit returns a history. Suppose we can query that history for whether an action was taken in a time range. The behavior of doit is then definable in terms of the function’s input and output.

- Other agents can be invoking doit as well; this does not affect the present agent…

- permit and forbid take effect only when the stated modifications to the action subspace are permitted.
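The notes on doit can be sketched in Python under a few assumptions: history is an immutable tuple of (agent, action, timestamp) triples, the permission check is any callable the caller supplies, and the return codes `"ok"` and `"denied"` as well as the names `doit` and `taken_in_range` are hypothetical. Modeling history as an immutable value is one way (an assumption, not the text's prescription) to make another agent's doit on its own copy leave the present agent's history untouched.

```python
from typing import Callable, Tuple

History = Tuple[Tuple[str, str, float], ...]   # ((agent, action, timestamp), ...)
OK, DENIED = "ok", "denied"                     # hypothetical return codes


def doit(agent: str, action: str, t: float, h: History,
         permitted: Callable[[str, str, float, History], bool]) -> Tuple[str, History]:
    """Take `action` at time `t` only if `permitted` allows it.

    Returns a return code and the resulting history; a denied action
    leaves the history unchanged.
    """
    if not permitted(agent, action, t, h):
        return DENIED, h
    return OK, h + ((agent, action, t),)


def taken_in_range(h: History, action: str, lo: float, hi: float) -> bool:
    """Was `action` taken by anyone at some time in the half-open range [lo, hi)?"""
    return any(act == action and lo <= ts < hi for _, act, ts in h)
```

A permission rule can itself be written in terms of `taken_in_range`, for example "no action may be taken twice".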

The resignations of these rights are targeted at a specific permission function $P_e$, which allows us to perform activities permitted by one system and disallowed by another, e.g. law and conscience, rationality and greed, etc. Since we have not introduced macro and action variables, or even functions, within the action space, we skirt issues like writing a macro that, when expanded, produces further expansions ad infinitum. But even when that is enabled, it will not be a problem, because for uninterpretable actions we have a convenient indiscernible result to resort to when we receive obnoxious or pathological questions and actions that we can safely deem unreasonable, irrelevant, or useless.

Now then, we may say that if an agent has taken an action $q(e, a, s, t)$, then we expect $P_e(a, t', H, n)$ to return impermissible for all $s \le t' < t$.

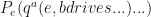

So far we have not distinguished actors (subjects) and objects of actions. But this does not hinder our efforts. An action space can be built constructively from verbs and nouns into a transitive action space. We can also explore building increasingly complex action spaces, for example by increasing the valency of the verbs used to construct an action space. In such spaces, the action passed to the quit-right macro may contain a subject not covariant with the object. In such a situation, we have actions in which one actor quits an action for another. The DMV, for example, has the right to take an action that forbids a person from driving according to traffic law. A motorist has the API to ask whether driving is permissible under traffic law and, absent such a prohibition, receive an affirmative answer of permissible.
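A toy Python sketch of a transitive quit-right, in which the actor and the subject differ. The names `QuitFor`, `AUTHORITY`, `take_quit` and `permitted` are hypothetical, and the rule that an actor may always quit its own rights but needs an entry in an authority table to quit someone else's is an illustrative assumption, not something the text specifies.

```python
import math
from dataclasses import dataclass


@dataclass(frozen=True)
class QuitFor:
    """Hypothetical transitive quit-right: `actor` quits `action` for `subject`
    in permission system `e` on the interval [s, t)."""
    actor: str
    subject: str
    action: str
    e: str
    s: float
    t: float = math.inf


# Hypothetical authority table: (actor, system) pairs allowed to quit rights for others.
AUTHORITY = {("DMV", "traffic law")}


def take_quit(q: QuitFor, history: list) -> bool:
    """Record `q` if the actor quits its own right or has authority in `q.e`."""
    if q.actor != q.subject and (q.actor, q.e) not in AUTHORITY:
        return False
    history.append(q)
    return True


def permitted(subject: str, action: str, e: str, when: float, history: list) -> bool:
    """The subject's query to P_e: is `action` permissible for them at `when`?"""
    return not any(
        q.subject == subject and q.action == action and q.e == e and q.s <= when < q.t
        for q in history
    )
```

Before the DMV acts, the motorist's query returns permissible; after a suspension starting at some time, queries at or after that time return impermissible, while a neighbor without authority cannot impose the same restriction.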

More to come…