There’s this idea in Deep Learning that Neural Networks are universal function approximators. They can approximate any function you can provide data for.

It has confounded me for a long time exactly how they do this for continuous-valued output, but recently, through the grapevine that is the Deep Learning community, I finally discovered one answer to this question.

Consider some deep neural network taking in an input and producing some kind of penultimate layer of activations, A. We want to write a formula for producing an output that approximates Y.

Oh boy, who are we kidding, let’s just drop down to TensorFlow code…

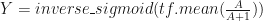

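You want to take the mean of the sigmoid-activated penultimate layer and pass it through the desigmoid, that is, the inverse sigmoid.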
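Since the original snippet is not reproduced here, this is only a minimal sketch of that pooling in plain Python, so that it runs without TensorFlow. The names `sigmoid`, `desigmoid`, `desigmoid_mean_pool` and the `eps` clamp are my own assumptions for illustration, not the original code. In TensorFlow the same shape of computation would presumably use `tf.sigmoid` and `tf.reduce_mean` over the feature axis rather than the batch axis.

```python
import math

def sigmoid(z):
    """Logistic sigmoid, mapping any real number into (0, 1)."""
    return 1.0 / (1.0 + math.exp(-z))

def desigmoid(p, eps=1e-7):
    """Inverse sigmoid (logit). eps is an assumed clamp to avoid log(0)."""
    p = min(max(p, eps), 1.0 - eps)
    return math.log(p / (1.0 - p))

def desigmoid_mean_pool(batch_activations):
    """Pool each input's activations separately, never across the batch.

    batch_activations: one row per input, each row already
    sigmoid-activated (so do not apply sigmoid to it again).
    """
    return [desigmoid(sum(row) / len(row)) for row in batch_activations]

# Hypothetical pre-activations for a batch of two inputs.
pre_activations = [[-2.0, 0.0, 2.0], [1.0, 3.0, 5.0]]
A = [[sigmoid(z) for z in row] for row in pre_activations]
y_hat = desigmoid_mean_pool(A)  # one continuous output per input
```

The first row is symmetric around zero, so its pooled output lands at roughly zero, while the second row of larger pre-activations pools to a clearly positive value.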

Be careful, of course, to calculate the pooling not across the batch but for each input, and not to sigmoid-activate A twice; the last activation must still be sigmoid-compatible. Note that since the sigmoid produces numbers strictly between zero and one, the mean, or any convex combination, of a bunch of such numbers stays in that same range, which makes it suitable as input to the desigmoid. And of course, if you need to, the activations could have been passed through the likes of tf.exp or tf.square and then filtered through tf.sigmoid.

For example, if you have a hunch about how Y ultimately grows with the activations, and you have already made sure the relevant quantity is positive, then you can simplify out the exponential and compute the result directly.

The sigmoid/desigmoid pair can also be replaced with other bounded activations like the tanh or ![\frac{x}{\sqrt[1/k]{1+x^k}}](https://s0.wp.com/latex.php?latex=%5Cfrac%7Bx%7D%7B%5Csqrt%5B1%2Fk%5D%7B1%2Bx%5Ek%7D%7D&bg=ffffff&fg=111111&s=0&c=20201002) and their respective closed-form inverses. It can also be replaced with a pair built from an unbounded constricting activation and its inverse, parameterized by a chosen whole number.
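To make the idea of swapping the pair concrete, here is a small sketch in plain Python. It assumes the algebraic activation is meant as x divided by the k-th root of (1 + x^k), restricted to non-negative x, in which case the inverse works out to y divided by the k-th root of (1 - y^k). The helper names `algebraic_squash`, `algebraic_unsquash` and `pool` are hypothetical.

```python
import math

def algebraic_squash(x, k):
    """x / (1 + x**k) ** (1/k) for x >= 0; the result lies in [0, 1)."""
    return x / (1.0 + x ** k) ** (1.0 / k)

def algebraic_unsquash(y, k):
    """Closed-form inverse of algebraic_squash for 0 <= y < 1."""
    return y / (1.0 - y ** k) ** (1.0 / k)

def pool(row, squash, unsquash):
    """Squash each value, average, then map back with the inverse."""
    squashed = [squash(a) for a in row]
    return unsquash(sum(squashed) / len(squashed))

row = [0.5, 1.0, 4.0]  # hypothetical non-negative pre-activations

# tanh paired with its closed-form inverse, atanh.
tanh_pooled = pool(row, math.tanh, math.atanh)

# The algebraic pair with k = 2.
alg_pooled = pool(
    row,
    lambda x: algebraic_squash(x, 2),
    lambda y: algebraic_unsquash(y, 2),
)
```

Because each squashing function is monotonic and its inverse undoes it exactly, the pooled value always lands between the smallest and largest entry of the row. Only the way the entries are weighted against each other changes from pair to pair.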

Tada!

This addresses several needs at once: your deep neural network's need for constricting nonlinearities like the sigmoids, your need to produce a continuous output that may grow at non-linear rates relative to the activations, your limited computational resources, and your having a lucid hunch as to how the output and the activations are related.

Hopefully this helps you and saves a significant amount of brain activity and experimentation. Your problem will probably need a special architecture using a configuration of this pooling layer.

P.s. The use of sigmoidal functions seems to beckon a probabilistic interpretation. The desigmoid, that’s the inverse sigmoid, can be interpreted as a lookup from the CDF of a random variable: the value at which it achieves that accumulation. Essentially, in the most basic configuration, this regressor uses each element of A in the penultimate layer to support (or to reflect evidence) that the desired Y is larger. In a human brain, this positive-only thinking seems overly restrictive. What if some field of A is a positive signal that strictly means a smaller Y? One way is to use a second FCL to remove the effect of one sigmoid from another. A second intuitive idea is to handle both inside the pooling itself, so that in one step this regressor can consider both support for larger and for smaller values of Y.
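The exact formula for this second idea is not reproduced here, so what follows is only one possible reading, sketched in plain Python and entirely my own assumption. Activations that argue for a smaller Y are flipped with 1 - a (which equals the sigmoid of the negated pre-activation) before everything is averaged together. The names `signed_pool`, `up` and `down` are hypothetical.

```python
import math

def desigmoid(p, eps=1e-7):
    """Inverse sigmoid (logit). eps is an assumed clamp to avoid log(0)."""
    p = min(max(p, eps), 1.0 - eps)
    return math.log(p / (1.0 - p))

def signed_pool(up, down):
    """Pool evidence for a larger Y (up) and for a smaller Y (down).

    Both lists hold sigmoid activations in (0, 1). A strong "down"
    activation is turned into a weak vote by flipping it to 1 - a.
    """
    votes = list(up) + [1.0 - a for a in down]
    return desigmoid(sum(votes) / len(votes))

balanced = signed_pool([0.9], [0.9])          # equal evidence both ways
leans_up = signed_pool([0.9, 0.8], [0.1])     # mostly "larger" evidence
leans_down = signed_pool([0.2], [0.9])        # mostly "smaller" evidence
```

With equally strong evidence in both directions the votes cancel and the output sits at about zero; otherwise the sign of the output follows the stronger side.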

P.p.s. I also want to put in a plug for our wonderful democracy. The computation of the mean explicitly mixes the votes of each activation in the penultimate layer equally: each neuron gets an equal vote as to the result. Politics aside, and in addition to convex combinations, all other range-preserving combinations are fair game (e.g. geometric mean, softmax, etc.), depending on the relationship between A and Y and the network that produced A.
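As a rough illustration of two such range-preserving alternatives, here is a sketch in plain Python. The function names are hypothetical, and the `scores` used for the softmax weights are an assumption: they stand in for whatever the network might produce to decide how much each neuron's vote counts.

```python
import math

def geometric_mean(row):
    """Geometric mean of values in (0, 1); the result stays in (0, 1)."""
    return math.exp(sum(math.log(a) for a in row) / len(row))

def softmax_weighted_mean(row, scores):
    """Convex combination of row, with weights given by softmax(scores)."""
    top = max(scores)  # subtract the max for numerical stability
    exps = [math.exp(s - top) for s in scores]
    total = sum(exps)
    return sum((e / total) * a for e, a in zip(exps, row))

row = [0.2, 0.5, 0.8]  # hypothetical sigmoid activations

geo = geometric_mean(row)
equal_votes = softmax_weighted_mean(row, [0.0, 0.0, 0.0])
loud_last = softmax_weighted_mean(row, [0.0, 0.0, 5.0])
```

Equal scores recover the plain mean, so every neuron votes equally, while a large score on the last entry pulls the pooled value toward 0.8. Either result is still a number between zero and one, so it can be fed to the desigmoid as before.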

little, while it may lead to increased

and consequently a larger piece of the cow(

).