There are many ways to think about what is good and what is not. The question has occupied humans for a very long time. To simplify matters, we can at least separate the question by time: we should consider "What is good?" at different moments in the life of an autonomous agent.

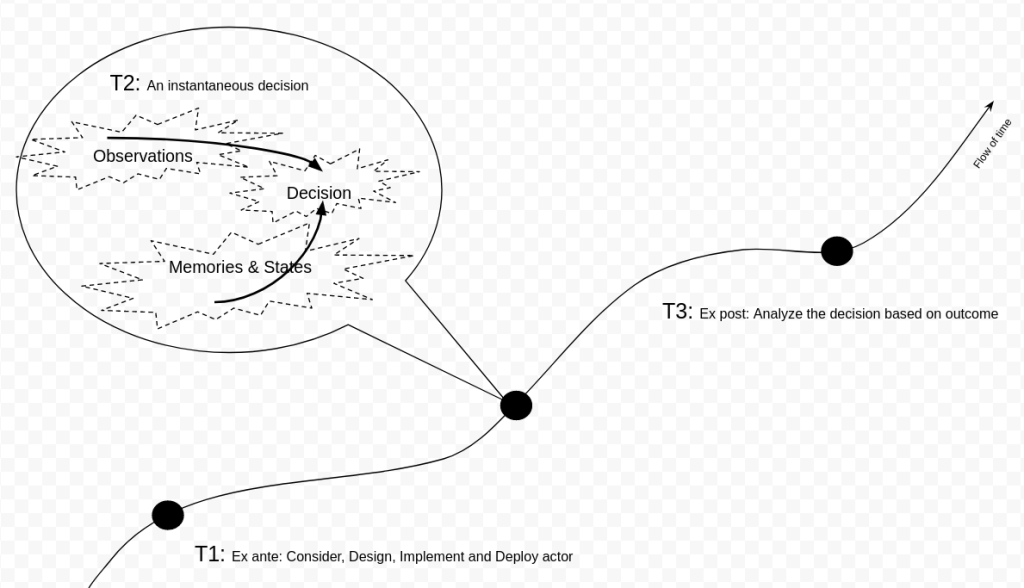

The picture illustrates the flow of time, with three instants highlighted by round dots. T1 is a time at which we have the leisure to analyze what might happen and, accordingly, to build a good agent to make decisions. T2 is the moment at which the agent makes a decision. T3 is a time after the agent has made that decision. By then, more observations of our physical world have become available to us, including the benefits or injuries the decision caused.

Let’s first look at time T2. At this moment, the subject of our discussion, an automated agent enhanced by GCI and other technology, has to make a decision. It does not matter whether decision making is a discrete process that produces decisions at intervals or a continuous process that produces a decision at every instant. All that matters is that at this moment there is some set of observations, plus some memories and state the agent has retained from the past. The decision is entirely a function of these two inputs. (Let’s model a true RNG as part of the observation: we observe a bit from an external source of entropy.) This kind of abstraction is very useful for programming, and it also reduces a vast universe of possibilities to something small enough for informative mathematical analysis.
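To make the abstraction concrete, here is a minimal Python sketch, assuming a toy agent whose observation is a single numeric reading plus one entropy bit, and whose memory is just the previous reading. The names `Observation`, `State`, `decide` and `run` are hypothetical illustrations, not part of any GCI system. The point is the signature: the decision is a pure function of (observation, retained state), and it returns the updated state for the next step.

```python
from dataclasses import dataclass
from typing import List, Tuple


@dataclass(frozen=True)
class Observation:
    reading: float    # hypothetical sensor value observed at T2
    entropy_bit: int  # one bit from an external entropy source (the "true RNG")


@dataclass(frozen=True)
class State:
    last_reading: float  # memory retained from earlier time steps


def decide(obs: Observation, state: State) -> Tuple[str, State]:
    """The decision at T2 depends only on the current observation and retained state."""
    trend = obs.reading - state.last_reading
    if trend > 0:
        action = "decrease"
    elif trend < 0:
        action = "increase"
    else:
        # No trend: break the tie with the observed entropy bit, so even
        # "random" behavior is an input rather than something hidden in the agent.
        action = "increase" if obs.entropy_bit else "decrease"
    return action, State(last_reading=obs.reading)


def run(observations: List[Observation], state: State) -> Tuple[List[str], State]:
    """A discrete decision process: apply decide() once per time step."""
    decisions = []
    for obs in observations:
        action, state = decide(obs, state)
        decisions.append(action)
    return decisions, state


if __name__ == "__main__":
    steps = [Observation(1.0, 0), Observation(0.5, 1), Observation(0.5, 1)]
    print(run(steps, State(0.0)))
```

Because `decide` has no other inputs, calling it twice with the same observation and state always yields the same decision. That determinism is what lets us reason about the agent entirely at T1.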

At time T1, we may choose one of many approaches to implement the agent. The agent may consider a snapshot observation at T2 or some aggregated information in time between T1 and T2. We may, for example, simulate all possible outcomes, and try to device an agent that can make the decision that work the best on average. Other metrics and objectives such as minimax may be used.(In multiagent extension, one may also consider using the QIM) We may for another example consider the regrets we may feel at T3 and focus on minimizing that consequence while making the agent (This is to say that at T3 we consider, additionally, all that might be observed to happen after T1). The one stipulation here is that at T1 we have to firstly specify what the observations and memories and states are. Without confining ourselves to these boundaries we cannot make an agent and consider its goodness. In reality, the function computation is not instantaneous, and for practical implementation purposes, we also stipulate bounded time in addition to bounded power of observation and GCI-mental capacity.

By isolating these three instants, we can see clearly that the agent’s action raises no question of good or bad, because the agent has no autonomy. The agent does not bear the weight of responsibility for acting well, since even its programming was decided for it, ahead of time, at T1. At T1, the entity responsible for the agent’s goodness may be forced to place itself within the agent’s restrictions (its observations and its GCI-mind). In a supervised setting, the responsible entity may provide supervision by answering the question “What is the good decision in this situation at T2?” or “How do I make a good decision knowing these things at T2?” A semi-supervised setting may ask “What other decision is this decision like?” Ultimately, to be good themselves, the responsible entity must do their best to ensure that the agent makes the best possible decision at T2.

The impact of that decision will be felt at T3. At every T3, nothing can be done about what happened at T1 and T2. All that remains is to celebrate our gains and mourn our losses.

The conclusion seems to be that GCI is not about bad machines. The analysis of algorithmic bias, the concern about every kind of bias in the training data, all the effort people put into making GCI: none of it is really about GCI itself. It is about the GCI scientists, engineers, users and policy makers. We are really talking about how we ourselves can be better at T1. Every complaint that GCI will be terrible, perhaps even end the world, is a complaint about ourselves at T1: that we have not made enough effort or progress for everyone to feel good about T2 and T3. Personally, I think we should keep bettering ourselves. WDYT?